Google Ads Quality Score in 2026: What Actually Moves the Needle

Stop overpaying for clicks. Learn what really moves the Google Ads Quality Score in 2026, how each component is weighted, and how to cut your CPC by up to 50%.


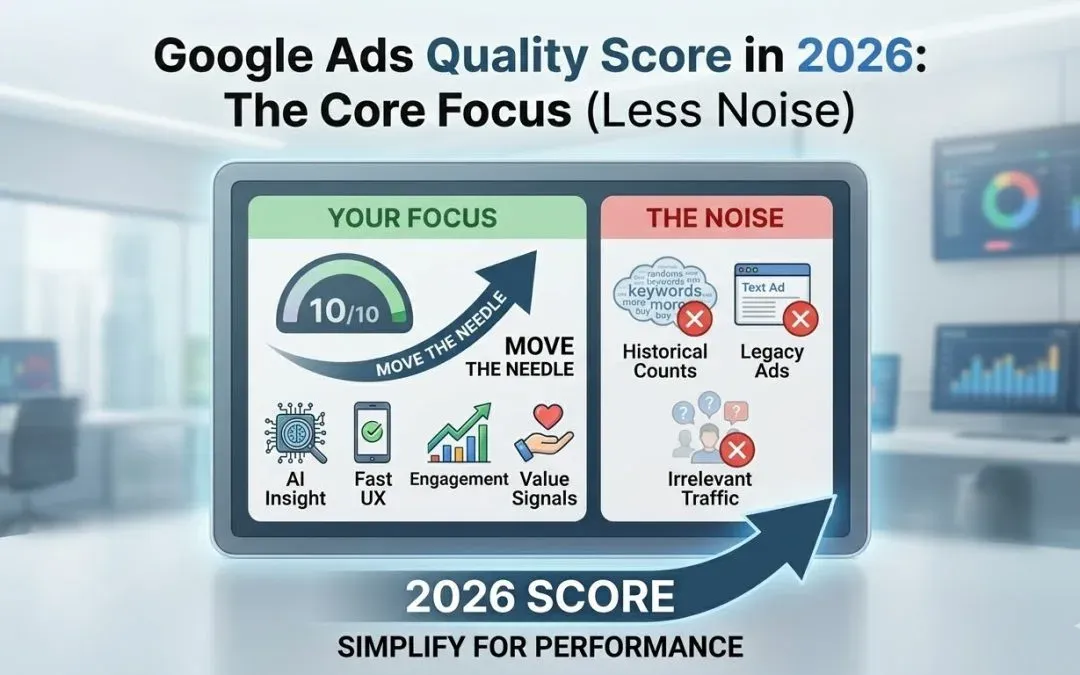

Most advertisers fall into one of two camps with Google Ads Quality Score. Either they obsess over it, chasing a 10/10 on every keyword like it’s a badge of honor. Or they dismiss it entirely, assuming Smart Bidding has made it irrelevant. Both positions cost money.

Here’s what matters: a keyword at Quality Score 1-3 can cost up to 400% more per click than the QS 5 baseline. A keyword at QS 10 unlocks up to a 50% CPC discount. I’ve seen this play out in real accounts repeatedly: an advertiser struggling with a £40 CPC drops to £22 after fixing landing page experience and tightening ad group themes. Same keyword, same bids. The Quality Score was the bottleneck the whole time. If you want context on how this fits into broader campaign structure, the Google Shopping campaign structure guide covers how budget allocation and architecture interact with quality signals.

Key takeaways

- A Quality Score of 1-3 costs up to 400% more per click than the QS 5 baseline; a score of 10 unlocks up to a 50% CPC discount (Adalysis practitioner research, directional estimates).

- The three components by estimated weight: Expected CTR (~39%), Landing Page Experience (~39%), Ad Relevance (~22%) — landing pages are the most underinvested area (Adalysis, 2024).

- The average Quality Score across 15,666 Google Ads accounts sits at 5-6; a score of 7 puts you ahead of most advertisers (WordStream, 2025).

- Quality Score is a diagnostic tool, not an auction input — Google has confirmed it’s a lagging indicator of real-time quality signals used at auction (Google Ads Help, 2024).

Contents

- What Quality Score Actually Is (And What It Isn’t)

- The Three Components and What Actually Moves Them

- Quality Score in Automated Campaigns: Smart Bidding and Performance Max

- Industry Benchmarks: What a Good Quality Score Actually Looks Like

- A Practical Quality Score Diagnostic: Run This on Your Account Today

- The Score Follows the Work

- Frequently Asked Questions

What Quality Score actually is (and what it isn’t)

Quality Score is a 1-10 diagnostic score assigned at keyword level in Search campaigns. Google calculates it based on three components: Expected Click-Through Rate, Ad Relevance, and Landing Page Experience. A 5 is average. A 7 is genuinely good. A 10 is rare and, in most cases, not worth pursuing at the expense of everything else.

The 1-10 score is not what Google uses at auction. Google has confirmed explicitly that Quality Score is a diagnostic tool, not a direct auction input. At every auction, Google calculates a real-time quality estimate based on the actual query, device, location, time of day, and dozens of other signals. The 1-10 score in the interface is a lagging snapshot based on historical impressions for exact searches of that keyword.

This distinction changes how you respond to it. If your Quality Score drops overnight from 6 to 5, don’t rewrite every ad. The 1-10 score lags by days. You’re looking at the reflection of something that already happened.

What Quality Score is not: a KPI to optimize in isolation, a ranking factor in Performance Max, or a reliable proxy for overall account health. I’ve audited accounts with average Quality Scores of 7 that were structurally broken, and accounts with an average of 5 generating excellent returns.

What it is: a visible proxy for the real-time quality signals that feed Ad Rank, which determines both auction position and actual CPC. Stronger quality signals mean a lower CPC for the same position. That’s the only reason it matters, and it’s reason enough.

How Quality Score moves your CPC

The CPC impact is neither linear nor symmetrical. The larger gains sit in the lower range: moving from 5 to 6 does more for you than moving from 7 to 8, and every step below 5 is steeper still. The figures below come from Adalysis’s practitioner research, derived from reverse-engineering historical auction data. They’re directional estimates, not precise Google-published figures.

| Quality Score | CPC Impact vs. Baseline (QS 5) |
|---|---|
| 1 | +400% |
| 2 | +150% |
| 3 | +67% |
| 4 | +25% |
| 5 | Baseline |
| 6 | -17% |
| 7 | -29% |
| 8 | -37% |
| 9 | -44% |
| 10 | -50% |

QS 5 is the neutral point. Below it, you pay a progressively steeper surcharge for the same position. Above it, you receive a progressively larger discount. Fixing a keyword from QS 3 to QS 6 moves it from paying +67% above baseline to paying -17%: that’s an 84-percentage-point CPC improvement on that keyword.
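To see what a move between two scores does to the price actually paid, the table can be turned into a small lookup. This is a minimal sketch in Python: the multipliers are the Adalysis directional estimates from the table, and the function names are hypothetical, chosen only for this illustration.

```python
# CPC impact vs. the QS 5 baseline, in percent, copied from the table above.
# These are Adalysis's directional estimates, not Google-published figures.
CPC_IMPACT_PCT = {1: 400, 2: 150, 3: 67, 4: 25, 5: 0,
                  6: -17, 7: -29, 8: -37, 9: -44, 10: -50}


def point_gap(qs_from: int, qs_to: int) -> int:
    """Percentage-point gap on the baseline scale (positive = improvement)."""
    return CPC_IMPACT_PCT[qs_from] - CPC_IMPACT_PCT[qs_to]


def relative_cpc_change(qs_from: int, qs_to: int) -> float:
    """Change in the CPC actually paid, as a fraction of the starting CPC."""
    before = 1 + CPC_IMPACT_PCT[qs_from] / 100
    after = 1 + CPC_IMPACT_PCT[qs_to] / 100
    return (after - before) / before


if __name__ == "__main__":
    print(point_gap(3, 6))                      # 84
    print(round(relative_cpc_change(3, 6), 3))  # -0.503
```

Read this way, the QS 3 to QS 6 fix is an 84-point swing on the baseline scale and roughly a 50% cut in the CPC paid on that keyword.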

The three components and what actually moves them

Quality Score has three components. Adalysis’s research estimates their weights at approximately 39% for Expected CTR, 39% for Landing Page Experience, and 22% for Ad Relevance. These aren’t Google-official figures, but they align with what I observe in real accounts when I look at which component’s improvement moves the needle most.
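One way to build intuition for these weights is a toy model that spreads the nine points between a score of 1 and a score of 10 across the components in proportion to the estimated weights. The sketch below is illustrative only: the linear form, the assumption that “Average” earns half of a component’s points, and every name in it are assumptions made for this example, not Google’s formula.

```python
# Estimated component weights quoted above (Adalysis estimates, not Google-official).
WEIGHTS = {
    "expected_ctr": 0.39,
    "landing_page_experience": 0.39,
    "ad_relevance": 0.22,
}

# Assumption for this sketch: each status earns a fixed share of its component's points.
STATUS_SHARE = {"Below average": 0.0, "Average": 0.5, "Above average": 1.0}


def toy_quality_score(statuses: dict[str, str]) -> float:
    """Map component statuses to a 1-10 figure under the linear toy model."""
    earned = sum(WEIGHTS[name] * STATUS_SHARE[statuses[name]] for name in WEIGHTS)
    return round(1 + 9 * earned, 2)


if __name__ == "__main__":
    weak_page = {
        "expected_ctr": "Above average",
        "landing_page_experience": "Below average",
        "ad_relevance": "Above average",
    }
    print(toy_quality_score(weak_page))
```

In this toy model, a keyword that is “Above average” on everything except a “Below average” landing page lands at around 6.5, which shows how far one weak, heavily weighted component can hold a score back.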

Expected click-through rate (~39%)

Expected CTR is Google’s prediction of how likely your ad is to be clicked, given the keyword and position, relative to other ads competing on the same keyword. It’s not a measure of your own historical CTR. It’s a comparison against every other advertiser showing up for that keyword.

What moves it: ad copy that matches search intent precisely. A searcher typing “emergency boiler repair London” and seeing “Heating Services. Get a Quote Today.” will not click at the same rate as “Emergency Boiler Repair in London. Same-Day Engineers Available.” The second ad speaks to what the person actually wants. Testing headline combinations in Responsive Search Ads matters here, but only when you’re rotating genuinely distinct value propositions, not minor variations of the same generic copy. A full approach to writing high-CTR ad copy is covered in the PPC ad copy guide.

What doesn’t move it: changing bids, adjusting match types, or restructuring campaigns. I’ve seen advertisers change keyword match types from broad to exact assuming it would improve CTR signals. It doesn’t work that way. The creative is the lever.

Ad Relevance (~22%)

Ad Relevance measures how closely your ad copy matches the intent behind the search query. Ad group structure has a direct impact here.

The common mistake is building broad ad groups where a single Responsive Search Ad covers 20, 30, sometimes 50 different keyword intents. The RSA tries to be relevant to everything and ends up specific to nothing. Ad Relevance suffers. The fix is tighter theming: fewer keywords per ad group, copy written for the specific intent of those keywords, and the primary keyword appearing in at least one headline.

Intent match matters more than keyword presence. A searcher looking for “best CRM for small business” wants reassurance the product suits their size and budget. “CRM for Small Businesses. No Setup Costs.” addresses that intent. “Best CRM Software. Try Free.” doesn’t, even if it contains words from the query. Dynamic Keyword Insertion doesn’t solve this either: automatically inserting a search term is not the same as writing an ad that addresses what the searcher actually wants.

Landing Page Experience (~39%)

Landing Page Experience is Google’s assessment of how relevant, transparent, and useful your landing page is for someone who clicked your ad. It carries the same estimated weight as Expected CTR. It’s the component most advertisers underinvest in, partly because fixing it requires more effort than rewriting a headline, and partly because it feels like a CRO problem rather than a PPC problem. In 2026, that distinction no longer holds.

What moves it: page content that matches ad copy and keyword intent, fast load times, mobile optimization, and no deceptive content or disruptive interstitials. If your ad promises “same-day delivery” and the landing page says “ships in 3-5 business days,” Google’s crawlers pick up that mismatch. So does the user, which is why this component correlates with bounce rate even when Google doesn’t use bounce rate directly.

Here’s what content mismatch looks like in practice. A solicitors’ firm runs a Search campaign targeting “employment tribunal solicitor London.” The ad mentions no-win-no-fee representation and a free initial consultation. The ad links to the firm’s general legal services page: a list of practice areas, brief partner bios, and a contact form buried near the footer. The words “employment tribunal” appear once, in a sidebar. There’s no mention of no-win-no-fee. Google’s crawler reads this and can’t find the content the ad promised. Landing Page Experience scores “Below Average.” The user bounces within seconds for the same reason. The fix is a dedicated page for employment tribunal enquiries that opens with the no-win-no-fee offer, explains the process clearly, and places the contact form in the first screen.

Landing Page Experience is growing in importance because of AI Max. Google’s AI-driven campaign features evaluate landing page content more deeply to determine ad placement and relevance. The page is no longer just the destination. It’s part of the targeting signal. If you’re not running regular landing page audits alongside your analytics reviews, you’re missing something that compounds over time.

In account audits, Landing Page Experience is the component that generates the most quick wins. Advertisers invest heavily in ad copy refinement and keyword structuring, then send everyone to the same generic homepage. A dedicated landing page for the campaign’s top keyword theme, written to match the ad’s promise, typically moves Landing Page Experience from “Below Average” to “Average” or “Above Average” within 3-4 weeks of sufficient impression data. The CPC reduction from that single change often pays for the landing page development cost within the first month.

Quality Score in automated campaigns: Smart Bidding and Performance Max

Smart Bidding

The argument goes: Smart Bidding uses machine learning to optimize bids in real time, so quality signals are handled dynamically. The 1-10 Quality Score becomes a lagging indicator of something the system already handles. Stop worrying about it.

This argument is half right and entirely misleading in practice.

Smart Bidding does use a richer, real-time version of the same quality signals that feed the 1-10 score. But those underlying signals (ad copy relevance, landing page quality, expected engagement) matter more in a Smart Bidding account, not less. Smart Bidding amplifies differences. If your landing page converts at 4% and a competitor’s converts at 9%, Smart Bidding learns this faster than a manual bidding account would and adjusts accordingly. Poor quality doesn’t get smoothed out by automation. It gets penalized more efficiently.

The conversion volume problem makes this particularly damaging. Google’s guidance recommends roughly 30 conversions per month for Target CPA campaigns and 50 for Target ROAS before the algorithm bids confidently. A landing page with poor experience that converts at 1.5% instead of a reasonable 5% systematically starves the algorithm during its learning phase. 200 clicks per week at 1.5% produces 3 conversions per week. At 3 per week, you need 10+ weeks to accumulate sufficient data. During those weeks, the system bids conservatively and underdelivers. By the time you investigate, the account looks like a bidding problem. It’s a landing page problem.
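The arithmetic behind that starvation effect is easy to rerun with your own numbers. The sketch below is a minimal Python helper; the function name and parameters are hypothetical, and the thresholds of 30 and 50 conversions are the guidance figures quoted above, not values read from any API.

```python
def weeks_to_reach(conversions_needed: int, clicks_per_week: float,
                   conversion_rate: float) -> float:
    """Weeks of traffic needed to accumulate a given number of conversions."""
    conversions_per_week = clicks_per_week * conversion_rate
    if conversions_per_week <= 0:
        raise ValueError("need a positive number of conversions per week")
    return conversions_needed / conversions_per_week


if __name__ == "__main__":
    # 200 clicks per week, as in the example above.
    print(round(weeks_to_reach(30, 200, 0.015), 1))  # weak page, 30 conversions
    print(round(weeks_to_reach(30, 200, 0.05), 1))   # reasonable page, 30 conversions
    print(round(weeks_to_reach(50, 200, 0.015), 1))  # weak page, 50 conversions
```

At 200 clicks per week, the 1.5% page needs about 10 weeks to reach 30 conversions, while a 5% page gets there in about 3.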

Performance Max

Performance Max has no keyword-level Quality Score. PMax doesn’t use keywords in the traditional sense, so there’s no keyword to attach a score to.

The equivalent quality signal in PMax is the asset group rating: Low, Good, or Best. Google evaluates the quality and diversity of your headlines, descriptions, images, and videos within each asset group, along with the relevance of those assets to audience signals and the final URL. A “Low” rating functions like a poor Quality Score in Search: it limits reach and increases effective costs. A “Best” rating signals the creative inputs are strong enough for the algorithm to work with.

The two campaign types often compete for the same users. If your Search quality is poor, you lose the inventory where intent signals are strongest. Don’t use PMax as a reason to deprioritize quality work on Search campaigns.

Industry benchmarks: what a good Quality Score actually looks like

According to WordStream’s analysis of 15,666 Google Ads accounts in 2025, the average Quality Score across accounts sits at 5-6 out of 10. Anything at 7 or above puts you ahead of the majority of advertisers in almost every industry.

| Industry | Average Quality Score |
|---|---|
| Apparel, Fashion & Jewelry | 7.36 |
| Shopping, Collectibles & Gifts | 6.90 |
| Finance & Insurance | 5.72 |
| Education & Instruction | 5.35 |
| Home & Home Improvement | 5.33 |
| Attorneys & Legal Services | 5.02 |
| Physicians & Surgeons | 4.95 |
| Dentists & Dental Services | 4.84 |

Source: WordStream Google Ads Account Study, 15,666 accounts, 2025.

Dentists, lawyers, and physicians score lowest for predictable reasons: competitive queries, compliance-constrained ad copy, and landing pages that underperform on mobile and load speed. Apparel and shopping score highest because product-level keyword targeting creates natural tightness between keyword, ad, and landing page.

A Quality Score of 7 is genuinely good. A 10 is achievable on branded or highly specific keywords, but chasing it on competitive head terms is rarely worth the investment. LeadGen Economy’s analysis models that improving from QS 5 to QS 8 on lead generation keywords reduces cost per lead by approximately 27%, based on the CPC discount at each score level with conversion rate held constant. That’s a modeled estimate, and it sits on the conservative side of the CPC table above: a QS 8 pays roughly 37% less per click than a QS 5, and with conversion rate truly constant, cost per lead would fall by that same proportion.

A practical Quality Score diagnostic: run this on your account today

This is the workflow I use when auditing accounts.

Start by filtering your keywords to show only those with a Quality Score below 5. Apply a secondary filter for impression volume: keywords with under a few hundred impressions in the last 30 days can be deprioritized. Low-impression keywords with poor Quality Scores rarely have enough data to act on.

For each keyword in that filtered list, look at which component is marked “Below Average.” Google shows this in the keyword columns panel (add the Quality Score component columns if they’re not visible). The below-average flag tells you exactly where the problem is.

If Expected CTR is below average, the issue is your ad copy relative to competitors. Review what else is showing for that keyword using Google’s ad preview tool. Are your headlines specific to the intent? Are you leading with a generic CTA or something that differentiates you? Test new headline combinations and allow enough impressions before drawing conclusions. For detailed guidance, the PPC ad copy guide covers the specific techniques that move Expected CTR.

If Ad Relevance is below average, the issue is usually structural. Is this keyword in an ad group with too many others pulling in different directions? Is the RSA serving too many intents at once? The fix is to tighten the ad group by removing mismatched keywords, or to write copy aligned more specifically to this keyword’s intent, including the keyword itself in at least one headline.

If Landing Page Experience is below average, run the page through Google’s PageSpeed Insights and check the mobile score. Then verify whether the page content actually delivers on what the ad promises. If someone searches for a specific product or service and the landing page opens on a generic homepage, that’s your problem. The fix is a dedicated landing page, not a homepage redirect. A broader Google Analytics audit often surfaces landing page quality issues in engagement metrics well before they appear in Quality Score.

After making changes, allow two to four weeks before drawing conclusions. Quality Score updates as new impression data accumulates, and meaningful movement on a keyword with moderate traffic typically takes that long to stabilize.

Prioritize ruthlessly. A keyword with a Quality Score of 3 and five impressions a month can wait. One driving 20% of your spend at QS 4 is where you start.
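The workflow above can be sketched as a small triage script over an exported keyword report. This is a rough illustration in Python, not a Google Ads API client: the `KeywordRow` fields, the `triage` function, and the 300-impression cutoff (standing in for “a few hundred”) are all assumptions made for this example.

```python
from dataclasses import dataclass

# Next step per component, paraphrasing the workflow above.
NEXT_STEP = {
    "expected_ctr": "rewrite ad copy around the search intent and test new headlines",
    "ad_relevance": "tighten the ad group and put the keyword in a headline",
    "landing_page_experience": "check PageSpeed Insights and build a dedicated page",
}


@dataclass
class KeywordRow:
    """One row of a hypothetical keyword export."""
    keyword: str
    quality_score: int
    impressions_30d: int
    cost_30d: float
    below_average: tuple[str, ...]  # components flagged "Below Average"


def triage(rows, max_score=4, min_impressions=300):
    """Keep low-QS keywords with enough data, highest spend first."""
    worth_fixing = [r for r in rows
                    if r.quality_score <= max_score
                    and r.impressions_30d >= min_impressions]
    worth_fixing.sort(key=lambda r: r.cost_30d, reverse=True)
    return [(r.keyword, [NEXT_STEP[c] for c in r.below_average])
            for r in worth_fixing]
```

Sorting by spend rather than by score is deliberate: it puts the QS 4 keyword eating a fifth of the budget ahead of the QS 3 keyword with almost no traffic.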

Most advertisers who tell me “Quality Score doesn’t matter anymore” have never systematically filtered for low-QS, high-spend keywords and measured the CPC impact. The claim usually comes from accounts where Quality Score averages 6-7 across the board, so the variation isn’t large enough to notice. The accounts where it matters most are the ones with tight budgets and high competition — exactly the accounts that can least afford to overpay. A £5 CPC reduction on a keyword driving 500 clicks per month is £2,500 per month in recovered budget. That’s not marginal.

The score follows the work

Quality Score reflects the quality of three things: your ad copy, your ad group structure, and your landing page. Fix those and the score follows. Optimize the score directly without fixing the underlying issues and you’re measuring the wrong thing.

In a Smart Bidding environment, the signals that feed Quality Score are the same signals that determine how well your algorithm learns. Getting ad relevance right, landing page experience right, and click-through rates up: these aren’t Quality Score optimization tasks. They’re the work of building a Google Ads account that functions. Understanding the full ecosystem, including how Performance Max interacts with Search campaigns and how attribution models affect how you read your conversion data, helps you see Quality Score as one signal within a larger system.

Frequently asked questions

Does Quality Score still matter with Smart Bidding?

Yes, but differently. Smart Bidding uses richer real-time quality signals, not the 1-10 diagnostic score directly. However, the same underlying factors (ad relevance, landing page quality, expected CTR) that produce a good Quality Score also produce better Smart Bidding performance. According to Google Ads Help documentation, landing page quality directly affects conversion rates, which are the primary input for all Smart Bidding strategies.

What’s the fastest way to improve a low Quality Score?

Check which component is flagged “Below Average” in your keyword columns. Landing Page Experience is often the highest-impact fix: creating a dedicated landing page that matches the ad’s promise typically moves the score within 3-4 weeks of sufficient data. According to Adalysis research, landing pages carry approximately 39% of the Quality Score weight, level with Expected CTR, and they are the component most underperforming accounts have invested in least.

Is a Quality Score of 10 worth chasing?

Rarely. WordStream’s 2025 account study shows average Quality Scores of 5-6, meaning a 7 already outperforms the majority. A 10 is achievable on branded terms and highly specific long-tail keywords, but the incremental CPC discount from 9 to 10 (moving from -44% to -50% vs. baseline) is small relative to the effort required on competitive keywords.

How does ad group structure affect Quality Score?

Tightly themed ad groups, with fewer keywords per group and ad copy written for the specific intent of those keywords, produce higher Ad Relevance scores. According to Google’s account structure best practices, the RSA system rewards copy that closely matches the specific intent of each keyword group. Broad ad groups force the RSA to try to serve too many intents simultaneously, which dilutes relevance.

Can Quality Score affect Performance Max campaigns?

Performance Max doesn’t use keyword-level Quality Score. The equivalent signal is the asset group rating (Low, Good, Best), which Google evaluates based on creative quality, diversity, and relevance to audience signals and the final URL. A “Low” asset group rating in PMax functions similarly to a poor Quality Score in Search: it limits reach and increases effective cost per result.

What single action improves Quality Score fastest?

The highest-impact action in the shortest time is improving Landing Page Experience: make sure the page delivers exactly what the ad promises, loads in under 2.5 seconds on mobile, and has the primary CTA visible without scrolling. Creating a dedicated landing page per ad group theme, rather than sending all traffic to the homepage, can move Quality Score from 4 to 7 in three to four weeks in accounts with sufficient traffic volume. On the ad side, tightening ad group themes so that each ad’s copy matches the specific intent of its keywords is the fastest lever for improving both Ad Relevance and Expected CTR. Changing match types alone won’t do it.

Sources

- About Quality Score for Search Campaigns — Google Ads Help

- Google Ads Account Study (15,666 accounts) — WordStream

- Google Ads Benchmarks 2025 — WordStream

- Google Ads Quality Score: The Ultimate Guide — Adalysis

- Quality Score Impact on Lead CPL — LeadGen Economy

- Smart Bidding — Google Ads Help

- PageSpeed Insights — Google
