Heuristic Analysis in CRO: A Step-by-Step Expert Guide

Heuristic analysis finds conversion friction without needing traffic. Learn how it differs from A/B testing, and follow a step-by-step process you can run today.


Heuristic analysis is one of the fastest diagnostic tools available in CRO, and one of the most misunderstood. Most teams either confuse it with A/B testing or treat it as an informal browse-around with an opinion attached. Neither approach captures what a proper heuristic evaluation actually delivers. According to Nielsen Norman Group, a well-run heuristic evaluation can uncover approximately 75% of a product’s most serious usability problems without a single user test session. That’s a substantial return on a structured 2-3 day process. For broader context on the CRO discipline, see my guide to CRO for ecommerce.

Key takeaways

- A structured heuristic evaluation uncovers approximately 75% of a product’s most serious usability problems without requiring any user testing (Nielsen Norman Group, 2000).

- Three evaluators find roughly 60% of usability problems; five evaluators approach 75%. Using just one reviewer misses the majority (Nielsen Norman Group, 2000).

- The average documented cart abandonment rate is 70.19% (Baymard Institute, 2024). Heuristic analysis of the checkout flow typically reveals 8-12 distinct friction points per site.

- Sites with fewer than 1,000 monthly conversions typically can’t produce statistically valid A/B test results in a reasonable timeframe. Heuristic analysis works regardless of traffic volume.

- The ICE score (Impact x Confidence x Ease) is the fastest defensible prioritization framework for converting findings into an action plan.

What exactly is heuristic analysis in CRO?

Heuristic analysis in CRO is a structured expert review of a website conducted against established usability and persuasion principles, without involving real users. Nielsen Norman Group defines it as an evaluation method where experts examine an interface against a checklist of recognized usability heuristics. The result is a list of identified friction points with evidence and recommendations, not statistical proof.

This distinction matters. Heuristic analysis generates hypotheses. A/B testing validates them. You need both, in sequence.

It’s not A/B testing. It produces no statistical significance and no declared “winner.” It tells you why something is likely causing friction and what to fix. Think of it as diagnostic reasoning, not experimentation. The difference in application: heuristic analysis answers “what’s probably broken and why,” while A/B testing answers “which version actually converts better.”

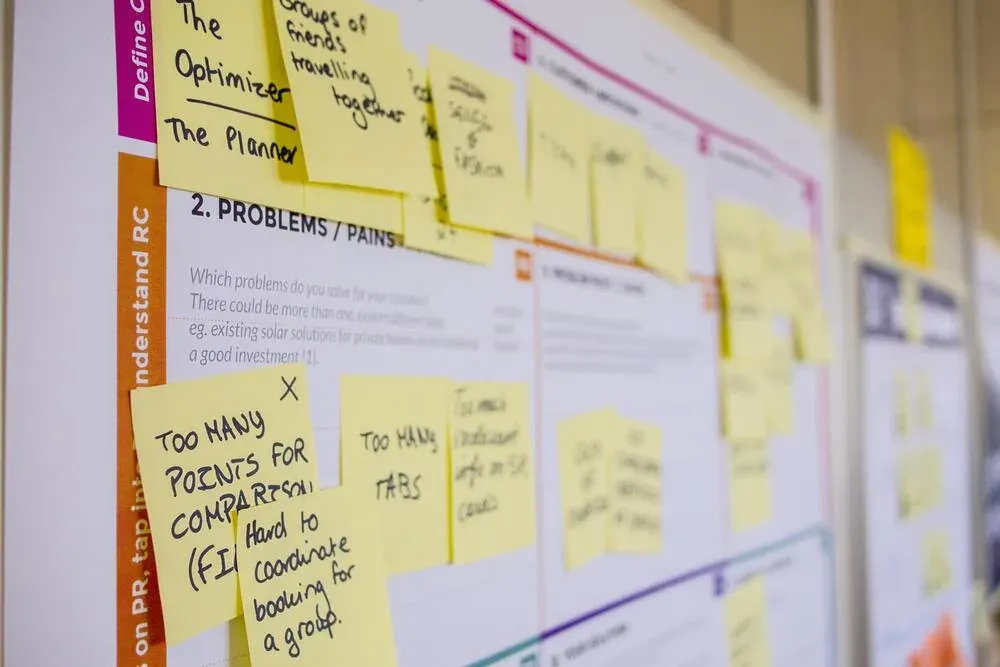

It’s also not a casual walkthrough. Effective evaluation requires predefined criteria, multiple reviewers, a severity scale, and documented findings with evidence attached to each one.

The LIFT model: five dimensions that drive or kill conversion

The LIFT model, developed by WiderFunnel and widely adopted in the CRO industry, gives evaluators a coherent framework for assessing every page element. Your Value Proposition sits at the center. Four other factors either lift or suppress conversion around it.

Value Proposition is the foundation. Before assessing anything else, ask: does this page immediately communicate why a visitor should buy here rather than elsewhere? If the answer is unclear within five seconds, every other improvement is building on sand.

Relevance tests whether the page matches what brought the visitor there. A Google Ads click on “buy running shoes size 10” landing on a generic footwear homepage fails the relevance test regardless of design quality. Baymard Institute research across 50,000+ tested user sessions consistently identifies message mismatch as a top cause of ecommerce abandonment.

Clarity covers visual hierarchy, copy readability, and the ease of understanding what to do next. Unclear primary CTAs and ambiguous product information rank among the top causes of abandonment in Baymard’s research.

Anxiety surfaces trust and risk concerns. Missing security badges, unclear return policies, no visible contact information, and anonymous brand presentation all generate hesitation at the decision moment. Baymard Institute estimates that 19% of cart abandonment is directly linked to payment security concerns.

Distraction captures anything pulling attention from the primary conversion goal. Pop-ups timed too early, auto-playing video, excessive navigation choices, and competing CTAs all dilute focus precisely when a visitor should be moving toward a decision.

In heuristic audits conducted across Spanish ecommerce clients, anxiety-related issues appear in every site scoring below 60/100 on structured evaluation frameworks. They’re also typically the fastest to fix: a trust badge row, a clearly worded return policy, and a visible phone number can be implemented in a few hours. The conversion impact of these small changes is consistently underestimated by development teams who don’t experience the purchasing hesitation a first-time buyer feels.

Peter Morville’s Honeycomb: a complementary lens

The LIFT model focuses on persuasion and conversion barriers. Peter Morville’s User Experience Honeycomb, introduced in a 2004 article for Semantic Studios, evaluates quality across seven dimensions: useful, usable, desirable, findable, accessible, credible, and valuable.

In CRO practice, the honeycomb works best as a secondary framework. Run a LIFT-first pass to catch immediate conversion blockers. Then apply the honeycomb to identify structural quality gaps that affect satisfaction and repeat visits.

Useful asks whether the product or service solves a real problem. Usable covers interaction ergonomics: does the interface work without friction? Desirable is about emotional resonance: do the visual design, brand voice, and photography create something people want to associate with?

Findable applies to site search and information architecture. If a user can’t locate a product, a returns policy, or a contact option, that’s a conversion blocker regardless of how well everything else performs. Accessible covers WCAG compliance and inclusive design, which can also support organic search performance. Credible overlaps directly with LIFT’s Anxiety dimension. Valuable integrates the other six: does the experience deliver enough value to justify the effort of purchasing?

Running both frameworks in sequence, LIFT first then the honeycomb, consistently produces a more complete finding set than either alone. LIFT catches high-urgency conversion blockers. The honeycomb catches structural quality issues that compound over time. The checkout conversion problem you’re trying to solve today often has roots in a findability or credibility issue that the honeycomb surfaces before it manifests in abandonment data.

How to run a heuristic evaluation: step by step

Nielsen Norman Group research shows that a single evaluator finds roughly 35% of usability problems, three evaluators find around 60%, and five approach 75%, with diminishing returns beyond five. For ecommerce audits where checkout friction is the primary concern, three reviewers working independently before consolidating findings is the most cost-effective configuration (Nielsen Norman Group, 2000).

Define scope and assign evaluators. Decide which pages to review. For ecommerce, the minimum scope covers the homepage, at least one category page, at least one product page, the cart, and the full checkout flow. Mobile must be evaluated separately from desktop, using a real device. Assign 3 to 5 reviewers: as the figures above show, coverage climbs from roughly 35% with one evaluator to around 75% with five, and returns diminish rapidly beyond that.

Brief evaluators independently. Each reviewer completes their evaluation independently before group discussion. Sharing observations too early creates anchoring bias: evaluators stop looking once they’ve heard a colleague’s finding. Provide a common evaluation criteria document, then work separately first.

Conduct the review. Walk through each page using the LIFT model as your primary lens. For each section, ask: does this element support the value proposition? Is it clear? Does it create anxiety? Is it a distraction? Note every finding immediately — the page, the specific element, the observed issue, and your initial evidence. Take annotated screenshots for every significant finding. These become your evidence layer when writing up recommendations.

Consolidate and deduplicate. After independent reviews, hold a consolidation session. Combine findings, remove duplicates, and flag disagreements. Where reviewers disagree on severity or on whether an issue exists, that’s a useful signal: revisit the element with fresh eyes or flag it for user testing.
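The consolidation step can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the evaluator names, the tuple layout, the severity labels, and the rule of keeping the highest severity when reviewers disagree are all hypothetical, and matching on exact page and element names is far cruder than what a real consolidation session does.

```python
from collections import defaultdict

# Hypothetical severity labels, ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def consolidate(reviews):
    """Merge independent reviews into one deduplicated finding list.

    `reviews` maps an evaluator name to a list of
    (page, element, severity, note) tuples.
    """
    grouped = defaultdict(list)
    for evaluator, findings in reviews.items():
        for page, element, severity, note in findings:
            # Normalize the key so "Checkout" and "checkout" are treated as one.
            key = (page.strip().lower(), element.strip().lower())
            grouped[key].append((evaluator, severity, note))

    merged = []
    for (page, element), reports in grouped.items():
        severities = {severity for _, severity, _ in reports}
        merged.append({
            "page": page,
            "element": element,
            "reported_by": sorted({evaluator for evaluator, _, _ in reports}),
            # Assumption: keep the highest severity, but flag the disagreement.
            "severity": max(severities, key=SEVERITY_ORDER.index),
            "disputed": len(severities) > 1,
            "notes": [note for _, _, note in reports],
        })
    # Findings reported by more evaluators come first.
    merged.sort(key=lambda f: len(f["reported_by"]), reverse=True)
    return merged


reviews = {
    "ana": [("checkout", "guest option", "high", "Account creation is forced"),
            ("product", "return policy", "medium", "Policy only in footer")],
    "ben": [("Checkout", "Guest option", "critical", "No guest checkout")],
    "eva": [("product", "return policy", "medium", "Hard to find returns info")],
}
consolidated = consolidate(reviews)
for finding in consolidated:
    print(finding["page"], finding["element"], finding["severity"], finding["disputed"])
```

Anything marked `disputed` is a candidate for a second look or for user testing rather than an automatic backlog item.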

For methods that complement expert review with real user data, see the guide to surveys for CRO.

What to evaluate on each page type

Different page types present different conversion challenges. Focus your evaluation time according to the template.

Homepage: Value proposition clarity within five seconds, visual hierarchy, primary navigation simplicity, trust signals (logos, certifications, review counts), hero image relevance, and the primary CTA’s visibility and contrast.

Category pages: Filter and sort functionality, product card information hierarchy (image quality, name, price, key attributes), pagination vs. infinite scroll trade-offs, and breadcrumb navigation.

Product pages: This is where most ecommerce conversions are won or lost. Baymard Institute identifies product page issues as the single largest category of ecommerce usability failures. Evaluate: image quality and zoom capability, description completeness, shipping information visibility, review quantity and authenticity, return policy prominence, and add-to-cart button position and contrast ratio.

Checkout: Baymard Institute’s 2024 checkout benchmark sets the average documented cart abandonment rate at 70.19%. The most common causes are forced account creation, unexpected shipping costs, and excessively long forms. The checkout optimization guide covers 20 specific fixes derived from Baymard’s research.

Mobile: Test on an actual device, not browser responsive mode. Check tap target sizes (Apple’s Human Interface Guidelines recommend at least 44x44 pt; Google’s Material Design recommends 48x48 dp), form input types (numeric keyboards for phone and postal code fields), and horizontal scroll issues that emulated views often miss.

How to document findings: Severity, Evidence, Recommendation

Every finding needs three components: a severity rating, evidence, and a specific recommendation. Without all three, findings accumulate in a spreadsheet and nothing gets built.

Severity scale:

- Critical: Prevents conversion. Must be fixed immediately. Example: checkout button broken on mobile iOS Safari.

- High: Significantly reduces conversion probability. Example: no return policy visible anywhere on product pages.

- Medium: Creates friction reducing conversion rate. Example: CTA button color has insufficient contrast against the background.

- Low: Minor UX issue with limited direct conversion impact. Example: footer links open in the same tab rather than a new one.

Evidence means a screenshot, an analytics data point, or a reference to a supporting study. “I think the CTA is unclear” is an opinion. “CTA button (#e8e8e8 on #ffffff) falls short of the 4.5:1 contrast ratio that WCAG AA requires for normal-size text, confirmed via Contrast Checker” is an evidenced finding.
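If you want to reproduce that kind of contrast evidence without a browser tool, the WCAG relative-luminance formula is short enough to sketch by hand. The function names below are my own, and the snippet only handles six-digit hex colors. WCAG AA asks for 4.5:1 on normal-size text and 3:1 on large text and user interface components.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a six-digit hex color such as '#e8e8e8'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio between two colors, from 1 (identical) to 21."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


ratio = contrast_ratio("#e8e8e8", "#ffffff")
print(f"{ratio:.2f}:1", "passes AA text" if ratio >= 4.5 else "fails AA text")
```

For the grey-on-white example the ratio comes out close to 1.2:1, so it fails both thresholds by a wide margin.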

Recommendations must be specific and testable. “Improve the CTA” is not actionable. “Change the primary CTA from grey (#e8e8e8) to a high-contrast brand color and validate with an A/B test against the current version” is.
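One way to enforce the three-component rule is to make it impossible to log a finding without evidence and a recommendation. The record below is a hypothetical structure of my own, not a standard format; the field names and the numeric severity values are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """The four-level scale above; higher numbers sort as more severe."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    page: str
    element: str
    issue: str
    severity: Severity
    evidence: str
    recommendation: str

    def __post_init__(self):
        # Reject findings that would just sit in the spreadsheet.
        for field_name in ("evidence", "recommendation"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"finding is missing its {field_name}")


cta_finding = Finding(
    page="product",
    element="primary CTA",
    issue="Button has insufficient contrast against the background",
    severity=Severity.MEDIUM,
    evidence="#e8e8e8 on #ffffff, below the WCAG AA contrast threshold",
    recommendation="Switch to a high-contrast brand color and A/B test it",
)
print(cta_finding.severity.name, "-", cta_finding.issue)
```

Because `Severity` is an `IntEnum`, a list of findings can be sorted by severity directly before any further prioritization.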

Common heuristic findings in ecommerce

Certain patterns appear in nearly every ecommerce heuristic audit. Knowing them helps you allocate review time to the highest-probability friction zones.

Unclear primary CTAs are the most common finding. Multiple competing calls to action on a single page, or CTAs that fail the “five-metre test” (readable from five metres away), appear in the majority of sites I’ve reviewed. CXL Institute research confirms CTA clarity as one of the highest-impact, lowest-effort improvements available.

Trust signal gaps appear consistently on product and checkout pages. Missing SSL indicators, no visible return policy, absent phone number or live chat, and generic-feeling testimonials all create anxiety at decision moments.

Mobile friction remains widespread. Tap targets smaller than 44px, form fields requiring pinch-to-zoom, non-native input keyboards for numeric fields, and checkout address forms that don’t trigger autocomplete are common across ecommerce sites that were designed desktop-first.

Information architecture failures include product descriptions that bury the key benefit in the third paragraph, specifications hidden under collapsed tabs, and shipping costs that are absent from product pages and revealed only at checkout. Baymard Institute identifies that last pattern as the leading individual cause of cart abandonment.

In a typical ecommerce heuristic audit, the checkout flow alone usually produces 8 to 12 distinct friction points. That’s where I start when time is limited. Even a 30-minute structured review of the checkout against LIFT criteria typically surfaces 3-4 high-priority findings that a team of developers and designers missed because they’re too familiar with the interface to see it as a first-time buyer does.

How to prioritize findings with the ICE score

A heuristic evaluation of a mid-size ecommerce site might produce 40 to 80 findings. The ICE score gives you a fast, defensible framework for deciding what to build first.

ICE stands for Impact x Confidence x Ease. Each dimension scores 1 to 10. The three scores multiply together for a composite score between 1 and 1,000.

Impact: How much will fixing this improve conversion? A broken checkout button on mobile scores 10. A missing breadcrumb in a rarely visited category page scores 2.

Confidence: How certain are you this is causing friction? A finding supported by analytics data and confirmed by three independent evaluators scores 9 or 10. A finding based on one reviewer’s instinct, with no supporting data, scores 3 or 4.

Ease: How easy is this to implement? A copy change scores 9. A full checkout flow redesign scores 2.

Sort findings by ICE score, descending. Start at the top. Re-evaluate the list after each sprint, incorporating new data from analytics or test results. The list is a living document, not a fixed backlog.
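The scoring and sorting can be sketched as follows. The three findings and most of their scores are illustrative assumptions; only the impact of 10 for a broken mobile checkout button, the impact of 2 for a missing breadcrumb, and the ease of 9 for a copy change come from the examples above.

```python
def ice_score(impact, confidence, ease):
    """Multiply the three 1-10 scores into a composite between 1 and 1,000."""
    for name, value in (("impact", impact), ("confidence", confidence), ("ease", ease)):
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {value}")
    return impact * confidence * ease


# (description, impact, confidence, ease); hypothetical example findings.
findings = [
    ("Checkout button broken on mobile", 10, 9, 6),
    ("Missing breadcrumb on a rarely visited category page", 2, 4, 8),
    ("Unclear CTA copy on product page", 6, 7, 9),
]

ranked = sorted(findings, key=lambda f: ice_score(*f[1:]), reverse=True)
for description, impact, confidence, ease in ranked:
    print(f"{ice_score(impact, confidence, ease):>4}  {description}")
```

Because the scores multiply, a single low dimension drags the whole finding down, which is why a high-impact but very hard fix can rank below a modest copy change.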

Frequently asked questions

How is heuristic analysis different from usability testing?

Heuristic analysis is expert-led, not user-led. Evaluators review the interface against established principles without involving real users. Usability testing observes real users completing real tasks. Heuristic analysis is faster and cheaper. Usability testing generates direct behavioral evidence. Nielsen Norman Group recommends using both within a research program rather than choosing one over the other.

How long does a heuristic analysis take?

A focused ecommerce audit covering five core page types takes one experienced evaluator 4 to 6 hours. Add consolidation time across multiple reviewers and documentation, and a full team evaluation runs 2 to 3 days. The output is a prioritized findings report that directly informs your testing roadmap. For the full CRO workflow from audit through testing, see CRO for ecommerce.

Can you run heuristic analysis on a low-traffic site?

Yes. This is one of heuristic analysis’s strongest applications. Sites with fewer than 1,000 monthly conversions typically can’t generate statistically valid A/B test results in a reasonable timeframe. Heuristic analysis requires no traffic threshold. It identifies friction based on established principles, not sample sizes. See CRO with low traffic: tactics that work without A/B tests for a full qualitative-first approach.
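To see why the traffic threshold matters, here is a rough sample-size sketch using the standard normal-approximation formula for comparing two conversion rates. Every input is an assumption I picked for illustration: a 2% baseline conversion rate, a 10% relative lift worth detecting, a 5% two-sided significance level, and 80% power. Your testing tool may use a different method and give different numbers.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


n = visitors_per_variant(baseline_rate=0.02, relative_lift=0.10)

# A site with 1,000 conversions a month at a 2% rate has 50,000 monthly visitors.
monthly_visitors = 1000 / 0.02
months_needed = 2 * n / monthly_visitors

print(f"{n:,} visitors per variant, about {months_needed:.1f} months for one test")
```

Under these assumptions a single two-variant test takes more than three months at 1,000 monthly conversions, and proportionally longer below that, which is the gap heuristic analysis fills.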

What’s the difference between a heuristic evaluation and a CRO audit?

A heuristic evaluation is one method within a CRO audit. A full CRO audit typically combines heuristic analysis, analytics review, user survey data, session recording analysis, and technical performance assessment. Heuristic analysis contributes the expert UX review component. The combined methodology gives you both the “what” (analytics) and the “why” (heuristic and qualitative methods).

How often should you run a heuristic evaluation?

Run one after significant site changes (new theme, redesigned checkout, new product category), after seasonal performance reviews, and when analytics show conversion rate degradation without an obvious cause. For actively growing ecommerce sites, a quarterly heuristic review keeps friction from accumulating unnoticed. Nielsen Norman Group recommends treating heuristic evaluation as a recurring practice, not a one-time diagnostic: interfaces accumulate friction as features are added without a systematic review.

Sources

- Heuristic Evaluation: How-To — Nielsen Norman Group

- How Many Test Users in a Usability Study — Nielsen Norman Group

- 10 Usability Heuristics for User Interface Design — Nielsen Norman Group

- The LIFT Model — WiderFunnel

- Ecommerce Checkout Usability Research — Baymard Institute

- Cart Abandonment Rate Statistics — Baymard Institute

- User Experience Design — Peter Morville, Semantic Studios

- Call to Action Best Practices — CXL Institute
